Two Keys to Success in the Age of AI

- Yusuf Öç

- 2 days ago

Updated: 24 hours ago

There is a strange paradox at the heart of the AI age. We have more tools than ever, more content than ever, and more ways to create than ever. Yet the people who stand out are not necessarily the ones with the most tools. They are the ones with the most interesting questions.

AI can now write blog posts, generate images, create code, summarise research, produce presentations, analyse data, and simulate strategic options. Many of these tools are free or widely accessible. The limitation is no longer access to technology. The limitation is how we think.

That changes the rules of marketing and branding.

For years, marketing was often reduced to visibility. Post more. Be seen more. Grow your audience. But in an environment where everyone can produce polished content in seconds, visibility alone is no longer enough.

Generic content has become abundant. Personality, judgement, curiosity, and original voice have become scarce.

Google’s own guidance on AI generated content makes this point indirectly: the issue is not whether content is produced with AI, but whether it is helpful, reliable, and created for people rather than merely produced to manipulate search visibility.

Google also explicitly frames useful content around experience, expertise, authoritativeness, and trust. In other words, the question is not “Did AI help you create this?” The question is “Is there a real human perspective behind it?”

AI is not a miracle. It is a multiplier.

A common mistake is to treat AI as a magic machine that makes everyone equally smart. It does not. AI is closer to a multiplier. If you bring shallow thinking, you often get polished shallowness. If you bring expertise, context, judgement, examples, constraints, and curiosity, you get something much more powerful.

The same tool can produce radically different results depending on the user.

One person writes:

“Write me a blog post about AI and personal branding.”

Another person writes:

“I want to write a blog post arguing that curiosity is becoming the key meta skill in the age of AI. I want to connect this to personal branding, behavioural economics, bounded rationality, cognitive overload, autonomous motivation, tech savviness, and the scarcity of original human voice. Please first help me structure the argument, challenge my assumptions, and suggest what is missing.”

The second prompt is not just longer. It reveals a different mind at work. It shows framing, taste, prior knowledge, intellectual direction, and a willingness to explore.

This is the uncomfortable truth of AI: the quality of the output depends heavily on the quality of the input. And the quality of the input depends on the quality of the human.

What is rare now is not content. It is you.

In a world of abundant content, your competitive advantage is not merely that you can produce. Everyone can produce. Your advantage is how you interpret, connect, question, and express.

Your voice matters because AI trained on generic patterns tends to reproduce generic patterns. It can imitate clarity, structure, and fluency. But it does not automatically know what you have lived, what you have noticed, what you find strange, what you disagree with, what your field has taught you, or what your audience needs to hear now.

That is why original human voice matters more, not less. AI can help turn raw thinking into structured content, but the raw thinking has to come from somewhere. It has to come from your experiences, your expertise, your questions, your taste, and your willingness to go one level deeper.

A few weeks ago, I gave a presentation on this topic at The Source Summit at Bayes Business School, London. I framed the paradox as: “Content is abundant, but you are scarce.” AI can generate text, visuals, code, and strategy, but your perspective, experience, and values are what make the brand recognisable.

Curiosity is a meta skill

Curiosity is not just a pleasant personality trait. It is a meta skill because it improves other skills. Curious people learn faster, ask better questions, notice weak signals, experiment earlier, and connect ideas across domains.

This matters for humans, but it also matters for machines. In AI research, curiosity driven learning describes systems that explore, test, and learn from novelty or uncertainty. The parallel is not perfect, but it is useful. Both humans and machines improve when they move beyond passive reception and toward active exploration.

Costas Andriopoulos makes a similar argument in Purposeful Curiosity. The book is not about random curiosity. It is about channelling curiosity toward meaningful questions, goals, and action.

That distinction matters. Curiosity is not scrolling. Curiosity is not consuming endless information. Curiosity becomes powerful when it is purposeful.

It asks:

What is really going on here?

Why does this matter now?

What am I missing?

What would a beginner ask?

What would an expert challenge?

What happens if I combine this idea with something from another field?

That is where personal brands become interesting. Not when they repeat what everyone else is saying, but when they reveal a way of thinking.

The behavioural economics problem: we are not as rational as we think

The challenge is that curiosity requires mental effort. And behavioural economics reminds us that humans are not unlimited information processing machines.

We have bounded rationality. Herbert Simon’s idea was simple but profound: humans do not always optimise. We often operate with limited information, limited attention, limited time, and limited cognitive resources. Instead of finding the best possible answer, we often settle for a good enough answer.

We also suffer from cognitive overload. When there is too much information, too many options, and too many decisions, we become tired. We look for shortcuts. We simplify. We satisfice. That is deeply relevant to AI.

Many people use AI poorly not because they are lazy, but because the human mind naturally seeks cognitive relief. A shallow prompt is easier than a deep one. Accepting the first answer is easier than challenging it. Asking AI to “write something” is easier than asking it to interrogate the problem, compare options, search for missing angles, or act as a critical thinking partner.

In my behavioural economics workshops, this pattern is clear: people have limited cognitive resources, stress and decision fatigue deplete those resources, and satisficing becomes a practical response to complexity.

AI can either reinforce this weakness or compensate for it.

Used badly, AI gives us faster shortcuts.

Used well, AI gives us better thinking scaffolds.

The difference is awareness.

If you know you are prone to cognitive overload, you can use AI to slow yourself down. You can ask it to challenge your assumptions. You can ask it to compare alternatives. You can ask it to identify what you have not considered. You can ask it to act as a research assistant before it acts as a writer.

That is where the real advantage begins.

Two engines of a sustainable personal brand

A sustainable personal brand in the age of AI needs two engines: agency and tech savviness. Curiosity is what connects them.

In my presentation, I described agency as internal motivation, the capacity to act according to your own compass, and tech savviness as openness to trying new tools. Curiosity is the force that keeps both alive.

Agency gives you direction.

Tech savviness gives you experimentation.

Curiosity binds them together.

Without agency, you wait to be told what to learn.

Without tech savviness, you avoid the tools that could extend your capability.

Without curiosity, both engines eventually stop.

Engine 1: Agency

Agency means taking control of your own learning. Instead of merely reacting to the external world, you build your own learning system.

This is closely connected to self determination theory, which emphasises three psychological needs: autonomy, competence, and relatedness. Autonomy means feeling that you are choosing rather than being pushed. Competence means feeling that you are improving. Relatedness means feeling connected to other people and meaningful communities.

In practical terms, agency means saying:

I want to learn this because it matters to where I am going.

I will set my own targets.

I will not wait until someone designs the perfect training programme for me.

I will try, fail, reflect, and improve.

This is not just motivational language. It matters for technology adoption.

In our GIST Do It study on smart wearables, we found that autonomous motivation did not simply create habits directly. It worked through technology features and then helped shape habitual use. In other words, tools alone were not enough. Gamification, tracking, sharing, and instructions only mattered when they connected with internal motivation. The study involved around 600 participants and showed that when motivation does not come from within, people eventually stop using even useful technologies.

That finding has direct relevance for AI.

Everyone can sign up for a new tool. Far fewer people build a learning habit around it.

Everyone can try ChatGPT once. Far fewer people use it every week to question their assumptions, improve their work, and build new capabilities.

Agency turns access into practice.

How do you build agency?

A few weeks ago, when I presented this idea, someone asked me a very practical question: “How do we get more agency?”

The simple answer is: set targets.

That sounds almost too obvious, but it is powerful. Agency needs direction. Without goals, curiosity becomes scattered. With goals, curiosity becomes cumulative.

Set short term, medium term, and long term learning objectives.

For example:

This week, I will test one new AI tool.

This month, I will create one piece of content using my own voice and AI support.

This quarter, I will build a repeatable workflow for research, writing, and publishing.

This year, I will become known for one clear area of expertise.

Targets create motion. Motion creates feedback. Feedback creates competence. Competence creates motivation. This is the snowball effect of learning.

Engine 2: Tech savviness

Tech savviness is often misunderstood. It does not mean being an engineer. It does not mean being able to code. It does not mean knowing every technical detail behind machine learning.

Tech savviness is the willingness to try new technologies.

When a new tool appears, the tech savvy person does not immediately say, “I cannot be bothered.” They ask, “What can this do? What is it good for? How could this help my work? Where does it fail?”

This attitude matters because AI tools are changing quickly. New features appear weekly. Search changes. Writing tools change. Image tools change. Presentation tools change. Automation tools change. If you wait until everything is certain, you are already late.

This is where Everett Rogers’ diffusion of innovations theory is useful. Innovation adoption is not only about technology. Rogers’ work famously grew from studying how new ideas spread, including agricultural innovations such as the adoption of new farming practices. The broader lesson is that innovation is about openness to new ways of doing things, not just fascination with gadgets.

Our own recent research on generative AI adoption in higher education supports this point. In Generative AI in Higher Education Assessments: Examining Risk and Tech Savviness on Student’s Adoption, my coauthors and I drew on data from 353 students and 17 interviews. We found that perceived risk was a barrier, while trust and tech savviness shaped engagement. We also found that tech savviness influenced actual use and moderated the relationship between performance expectancy and AI use.

The implication is important: people who are more comfortable exploring technology do not just use AI more. They understand its possibilities and limitations differently.

They are less likely to wait for perfect certainty.

They learn by trying. And in a fast moving environment, that difference compounds.

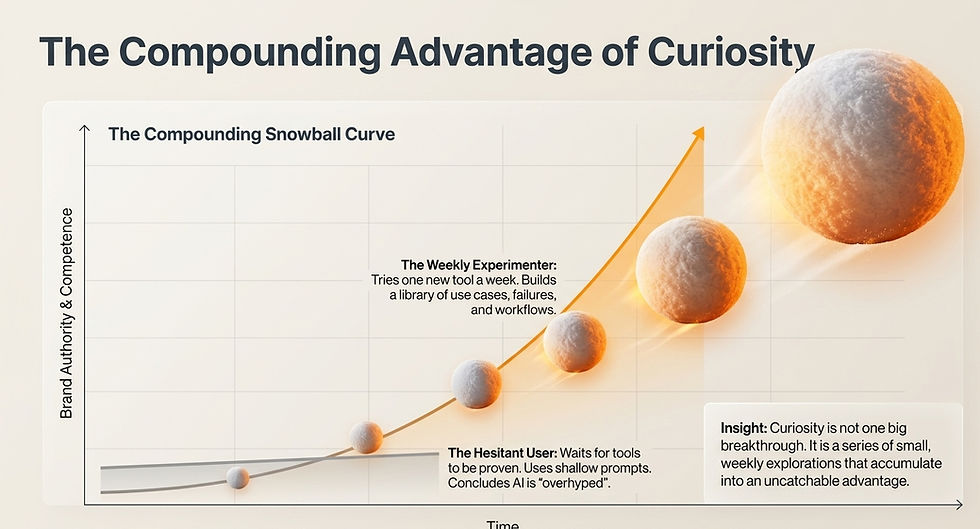

The compounding advantage of curiosity

Imagine two professionals.

One tries a new AI tool every week. They test NotebookLM, Gamma, Claude, ChatGPT projects, Perplexity, image generation tools, automation tools, and AI search. They do not master everything, but they learn enough to understand what each tool changes.

The other person waits. They assume the tools are overhyped. They use AI occasionally, usually with shallow prompts, and conclude that the output is average.

After one week, the difference is small.

After one year, the difference is enormous.

The first person has built a library of use cases, failures, prompts, examples, workflows, and intuitions. They have learned what to trust, what to check, and when to use which tool. They have also developed a stronger personal voice because they have repeatedly turned learning into output.

This is why curiosity compounds.

It is not one big breakthrough. It is a series of small explorations that accumulate into judgement.

The new brand question: how do you think?

If everyone has access to similar tools, then tools are not the differentiator. Thinking is.

Your personal brand is increasingly shaped by:

How you frame problems.

What questions you ask.

What examples you notice.

How deeply you explore.

How you connect ideas.

What you refuse to oversimplify.

How recognisable your voice is.

This is where AI actually raises the bar. In the past, weak thinking could hide behind polished writing. Now polished writing is cheap. The reader, the platform, and the algorithm increasingly look for something else: usefulness, originality, trust, experience, and perspective.

Google’s AI Overviews are designed to provide AI generated snapshots with links that allow users to dig deeper. This means content increasingly competes not only with other articles, but also with AI mediated summaries of the web.

So the question becomes: why should anyone click through to you?

The answer cannot be “because I produced content.”

The answer has to be “because I think in a way that is worth following.”

Practical curiosity habits for the AI age

Curiosity becomes a brand asset only when it becomes a habit. Here is a simple loop:

Be curious. Try something. Learn from it. Write about it. Share it. Teach it. Become more curious.

This loop turns passive consumption into active production. It also helps you avoid the trap of becoming a content repeater. You are not just summarising what others have said. You are documenting what you are learning, what you are testing, and what you now believe.

A practical weekly routine could look like this:

Choose one AI tool, feature, or workflow.

Test it on a real task.

Ask what it does well and where it fails.

Write a short reflection in your own voice.

Share one useful lesson with your audience.

Save the workflow for future use.

Repeat.
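If you prefer to keep this routine in a structured form rather than in loose notes, the steps above could be sketched as a small log in Python. This is one possible layout under my own assumptions: the class names `Experiment` and `CuriosityLog`, the field names, and the sample entries are all hypothetical placeholders, not findings.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Experiment:
    """One pass through the weekly routine (hypothetical structure)."""
    week_of: date
    tool: str          # the tool, feature, or workflow you chose
    task: str          # the real task you tested it on
    worked_well: str   # what it does well
    failed_at: str     # where it fails
    lesson: str        # the one lesson you shared with your audience


@dataclass
class CuriosityLog:
    """Accumulates experiments so that small explorations add up."""
    entries: list[Experiment] = field(default_factory=list)

    def add(self, entry: Experiment) -> None:
        self.entries.append(entry)

    def tools_tried(self) -> list[str]:
        # Unique tool names, sorted alphabetically.
        return sorted({entry.tool for entry in self.entries})

    def lessons(self) -> list[str]:
        # One line per experiment, in the order they were logged.
        return [f"{e.week_of.isoformat()} {e.tool}: {e.lesson}" for e in self.entries]


# Placeholder entries for illustration only.
log = CuriosityLog()
log.add(Experiment(date(2025, 1, 6), "NotebookLM", "summarise my own slides",
                   "placeholder strength", "placeholder weakness", "placeholder lesson A"))
log.add(Experiment(date(2025, 1, 13), "Gamma", "draft a workshop deck",
                   "placeholder strength", "placeholder weakness", "placeholder lesson B"))

print(log.tools_tried())
print("\n".join(log.lessons()))
```

A spreadsheet or a notebook page would serve just as well. What matters is that each week leaves a record you can return to.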

This sounds simple, but most people will not do it consistently. That is precisely why it works.

Curiosity wins because it keeps the human in the loop

There is a dangerous way to use AI: handing over the thinking.

There is a powerful way to use AI: staying deeply involved in the thinking.

The best AI users do not disappear from the process. They become more present. They ask sharper questions. They provide richer context. They challenge outputs. They refine. They compare. They add stories. They inject judgement. They decide what is worth saying.

That is the future of personal branding.

Not louder.

Not more automated.

More curious.

The winning brands in the age of AI will not be the ones that produce the most content. They will be the ones that combine human curiosity, domain expertise, technological openness, and a recognisable voice.

AI can help you write.

Curiosity helps you have something worth saying.

If you have read all the way to here, you deserve the full presentation. The original was in Turkish, so I created one in NotebookLM, which looks fine in my opinion.
